The Current Pressures Firms Are Under

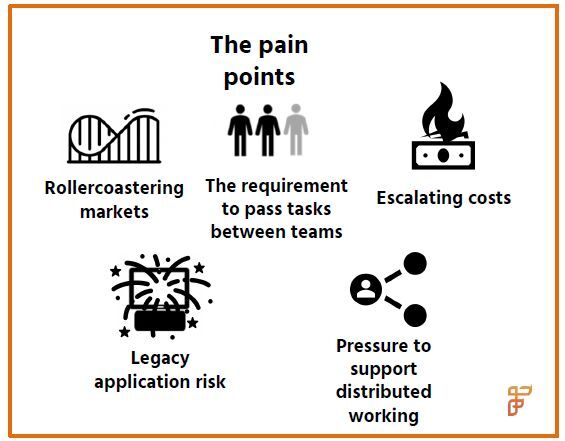

Financial institutions are facing challenges in an environment in which the meaning of business as usual has fundamentally changed. The COVID-19 crisis has thrown a spotlight on the inefficiencies underlying many functional areas of capital markets firms and caused C-suite executives to reevaluate operational resilience in light of increasing volumes and volatility, and the shift to a remote working environment.

The ongoing market volatility has caused significant increases in trading volume in spite of the overall market downturn, which has put pressure on front, middle and back office teams to keep up with the pace. Though the market is no stranger to volatility (it has witnessed numerous ups and downs since the global financial crisis of 2008), recent circumstances are unique and are playing out on a global stage.

While previous crises were primarily economic in nature, the COVID-19 pandemic is a non-economic event that has impacted every market in slightly different ways. Both global and local firms have been affected and the industry at large has been compelled to work remotely at scale.

The geographic diversity of the capital markets has also played a part in creating unique challenges. For instance, remote working is more easily achieved in markets like the United States, where workers have home computers or laptops, than in countries that host vast offshore centres, such as India, where laptops had to be shipped to support home working.

Previous crises also saw markets experience serious operational and data challenges, but this time no market has been shielded from the volatility effect, which has instead been magnified for global firms. This volatility has left many firms on the back foot in terms of assessing risk as markets move and asset class dynamics change.

For firms struggling with batch processes and end-of-day data delivery, business is being lost to newer, more agile competitors and risk assessments are slow to reflect the changing market dynamics. The industry has been struggling with the rising tide of data for some time and the current circumstances are increasing the pressure on firms to address their underlying data architecture’s weaknesses, to better position them for today’s challenges and those of the future.

Seamless access across a global firm’s multiple data silos is almost impossible without a real-time, consistent and secure data layer that brings the relevant information to the appropriate functional heads at the right time to make strategic decisions. This data access will also enable firms to better deal with the ongoing challenges of remote working and business continuity adaptations as requirements continue to evolve. Many middle and back office systems are struggling to cope with remote access requirements, but with a harmonised data layer in place these functions will be much better supported.

The legacy technology environment is not conducive to passing work from one individual to another and there is a high degree of key person risk across all functions. Data isn’t accessible across systems and heads of business are struggling to garner an accurate picture of the market and the relevant opportunities presented by ongoing client and market developments. An end-of-day risk assessment is less relevant when markets are moving so swiftly and unpredictably, which means intraday margining and asset class valuation have been significant challenges.

Sections of the market that have, up until now, been reliant on in-person interactions for client business support, such as financial advisors, have also been struggling to adapt. Online and virtual client interactions are better supported with timely data insights and cloud-enabled technologies.

Capital markets firms have been slow to move to cloud-enabled or cloud-hosted environments and to transform processes from manual to digital in key areas of the trade lifecycle. The incompatibility between the next generation technology platforms that have been introduced and the legacy technologies that remain has exacerbated the data challenge. Sunsetting legacy applications takes a lot of time and effort, but firms shouldn’t be held back by these limitations. Although data silos will persist for the foreseeable future, investment in modern data management technologies such as an enterprise data fabric can allow firms to stitch together distributed data from across the enterprise, as well as provide analytical capabilities and insights in real time.

In December 2019, the UK’s Financial Conduct Authority (FCA) published a consultation paper focused on highlighting the need for operational resilience improvements within financial institutions [1]. It is the first major global regulator to begin such a review, but it will certainly not be the last as 2020 is proving to be a perfect test case for regulators to assess how well the industry is prepared for a crisis.

Firms absolutely need to ensure they are able to stand up to this scrutiny and that of any other major industry regulator. Meeting service level agreements and minimising the risk of disruption to business as usual are therefore table stakes for capital markets firms as they emerge out of this crisis and into whatever the future brings. This means that risk management, stress testing capabilities and analytics are key to keeping off the regulatory radar, and all of these require strong data support.

Building a Framework for Change

All of these challenges amount to a huge operational risk headache for senior management teams and C-suite executives in the current environment. However testing it may be, a crisis such as this shouldn’t go to waste. Firms can differentiate themselves from their peers in this tough market by focusing on key investments that will not only enable them to avoid operational risk pitfalls in the short term but also stand them in good stead for long-term competitive growth and help them build the foundations of a digitally transformed business. The pains being experienced in the current crisis can be tackled by an honest assessment of technology capabilities and remedied by investment in key areas.

Technology has moved on a long way over the last decade, and new technologies are now available that can deliver accurate, harmonised information from across the enterprise to business heads, on demand and in real time, to address many of these challenges. These technologies, including intelligent enterprise data fabrics, help firms make better use of their existing data architectures by allowing their existing applications and data to remain in place, while accessing, integrating, harmonising and analysing the data in flight and on demand to meet a variety of business initiatives. Moreover, these new technologies are able to scale out dynamically to accommodate increases in data volumes and workloads, as markets and volatility levels spike in times of crisis.
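To make the idea of leaving data in place and harmonising it in flight more concrete, the following is a minimal Python sketch rather than a description of any vendor's product. The two booking systems, their field names, the `Position` record and the `DataFabric` class are all hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class Position:
    """Harmonised record served to the business, whatever the source silo."""
    account: str
    instrument: str
    quantity: float
    source: str  # name of the silo the record came from


# Hypothetical silos: each keeps its own schema and stays where it is.
LONDON_BOOK = [{"acct": "A1", "isin": "GB0002634946", "qty": 100}]
NEW_YORK_BOOK = [{"account_id": "B7", "security": "GB0002634946", "units": "250"}]


def from_london(row: dict) -> Position:
    return Position(row["acct"], row["isin"], float(row["qty"]), "london")


def from_new_york(row: dict) -> Position:
    # This silo stores quantities as text, so the adapter normalises the type.
    return Position(row["account_id"], row["security"], float(row["units"]), "new_york")


Fetch = Callable[[], Iterable[dict]]
Adapt = Callable[[dict], Position]


class DataFabric:
    """Toy access layer: queries each silo on demand and maps rows in flight."""

    def __init__(self) -> None:
        self._sources: List[Tuple[Fetch, Adapt]] = []

    def register(self, fetch: Fetch, adapt: Adapt) -> None:
        self._sources.append((fetch, adapt))

    def positions(self) -> List[Position]:
        # Nothing is copied into a central store; silos are read per call.
        return [adapt(row) for fetch, adapt in self._sources for row in fetch()]

    def total_by_instrument(self) -> Dict[str, float]:
        totals: Dict[str, float] = {}
        for position in self.positions():
            totals[position.instrument] = totals.get(position.instrument, 0.0) + position.quantity
        return totals


fabric = DataFabric()
fabric.register(lambda: LONDON_BOOK, from_london)
fabric.register(lambda: NEW_YORK_BOOK, from_new_york)
print(fabric.total_by_instrument())
```

Because each silo is read at query time, a trade booked in either system shows up in the next firm-wide view without a batch load. A production fabric would add security, caching and scale-out, none of which this sketch attempts.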

The creation of data lakes was a popular trend around five years ago and many data teams built these lakes with a view to gaining better business insights and building new data-focused services.

The reality, however, is that many of these data lakes (now sometimes referred to as "data swamps") are rather murky. The data was fed into these architectures without enough forethought and it is now difficult to access and turn into usable information that can be deployed for tasks such as client insights or market intelligence.

For firms that have already created and populated data lakes, a common use case is to deploy a modern data fabric to sit between the business and the firm’s existing data lake to facilitate the translation of this data and its related metadata into functionally usable information. Another option for firms now coming to the table with a view to building such an architecture is to deploy a smart data lake, and it is the “smart” aspect that is all important in this endeavour. Like a data fabric, a smart data lake provides the metadata layer that can translate the data into usable information.

A strategic review of the current technology environment across the front, middle and back office should focus on eliminating duplicative processes, increasing efficiency and planning system retirement in the longer term. It should also entail building up data capabilities to enable front office and risk management to be on the front foot and capable of reacting swiftly to market changes. As remote working becomes more commonplace across the industry, firms need to focus on deploying technologies that are platform and cloud agnostic, and that support a more flexible, agile and scalable way of working in the future.

The majority of firms will eventually be compelled to replace their legacy technology platforms, but this process will take time and the interim stages will require data support to ensure that information contained in legacy systems and next generation systems is accessible and harmonised for business use. It is also unlikely that silos will be eliminated for good—functionally-specific applications and mergers and acquisitions are a fact of life for capital markets firms and these bring with them further data silos and integration challenges. A data fabric can eliminate the friction that exists for lines of business when attempting to access information from multiple systems and multiple functions.

The benefits to the business of investment in an intelligent, distributed, enterprise data fabric are manifold and relate to accelerating the pace of insights—both internal and external:

- Valuable insights can be delivered to the business and end clients via a consistent, integrated and harmonised data layer that can be accessed with high performance and at scale.

- The next generation of technology advancement must be built on strong data foundations. Artificial intelligence and machine learning technologies require a high volume of current, clean, normalised data from across the relevant silos of a business to function—a data fabric can deliver this data without requiring an entire structural rebuild of every enterprise data store.

- Firms can benefit from being able to respond to regulators’ ad hoc queries within a rapid timeframe and keep out of the compliance hot seat. Financial institutions cannot afford to take chances with their reputations as regulators continue to crack down on data quality underlying reporting, as well as examining firms’ operations and controls. A data fabric can deliver the flexibility and performance required to meet these obligations.

- Timelier risk data and analytics mean firms can adapt in real time to market developments, which has benefits for both capital and liquidity management. Decision-making across internal functions is easier when firms have a more contemporary view of risk.

- Internal teams can benefit from seamless data access when passing tasks from one team member to another due to staffing shortages in the current environment. Key person risk can be reduced if all teams have permissioned access to required data from across the organisation.

- Data security is of paramount importance and working with a reliable partner in this space, rather than attempting to patch together multiple open-source tools, is a much safer option in the face of increased regulator and client scrutiny of cybersecurity and operational resilience.

- The business can scale more efficiently and effectively due to the ability to handle any market-driven spikes in data and analytic workloads. The streamlining of data gathering processes also means reduced operational risk and increased operational resilience.

The Future Outlook

In the longer term, a data fabric fits into the goal of enabling digital transformation at the enterprise level, as data can be accessed from all corners of the business alongside the all-important metadata that enables data provenance to be established. Rather than struggling to understand the timeliness or accuracy of the various data attributes and to put together an audit trail for clients, regulators or internal teams, firms can use a data fabric to access the underlying source data and its lineage.
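As an illustration of what carrying lineage alongside the data can look like, here is a minimal Python sketch. It is an assumption-laden toy, not a description of how any particular data fabric stores provenance: the `Lineage` and `Traced` classes, the `legacy_pricing` system name and the sample price are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Lineage:
    """Provenance metadata that travels with a value (hypothetical structure)."""
    source_system: str
    as_of: datetime
    steps: Tuple[str, ...] = ()


@dataclass(frozen=True)
class Traced:
    """A value paired with the record of where it came from and what was done to it."""
    value: float
    lineage: Lineage

    def apply(self, description: str, fn: Callable[[float], float]) -> "Traced":
        # Each transformation returns a new value and appends a step to the lineage.
        new_lineage = Lineage(
            self.lineage.source_system,
            self.lineage.as_of,
            self.lineage.steps + (description,),
        )
        return Traced(fn(self.value), new_lineage)

    def audit_trail(self) -> List[str]:
        origin = f"sourced from {self.lineage.source_system} as of {self.lineage.as_of.isoformat()}"
        return [origin, *self.lineage.steps]


# Hypothetical example: a price quoted in pence by a legacy system.
raw = Traced(
    12550.0,
    Lineage("legacy_pricing", datetime(2020, 6, 1, 16, 30, tzinfo=timezone.utc)),
)
in_pounds = raw.apply("converted pence to pounds", lambda v: v / 100)

for line in in_pounds.audit_trail():
    print(line)
```

The design choice here is that lineage is never reconstructed after the fact: every transformation records itself as it happens, so the audit trail for a client, regulator or internal team is simply read off the value.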

The data can be delivered in real time to internal and external parties—whether that’s additional insights for clients or risk information for front-office teams assessing the cost of a trade. As markets move, which is a frequent occurrence in the current market, risk management, collateral management and pricing teams can ensure they’re keeping up-to-the-minute on actions that need to be taken to maintain liquidity and reduce counterparty and market risk exposure.

Financial advisers can ensure they’re able to communicate the best options to their clients as they appear and fend off the competition from the newer entrants in the market. Wealth management firms can also benefit from more high-quality data to plug into simulations that can be run programmatically to enable the business to better identify opportunities and reduce risk.

Traders can rely on the data they receive from internal sources in real time, rather than waiting for internal teams to validate and scrub information, especially from the less liquid markets.

Faster data provision means greater support for decision-making and a more holistic approach to data across silos means firms have the data sets to benefit from next generation technology applications such as machine learning, artificial intelligence and natural language processing. These technologies are data hungry and the more information a firm can feed into such tools, the more accurate the outcome. Of course, the quality of the data that is fed into a machine-learning platform must also be high, which necessitates the provision of accurate and current data.

When assessing all of the options on the market, the hardware costs and architectural complexity of any solution need to be considered carefully. Architectures that require integrating multiple, disparate, standalone technologies, such as an in-memory data grid with a separate database management system, can incur high costs, increased hardware requirements, and development and maintenance complexity.

Today, options that provide a single architecture with multiple layers and capabilities (a horizontally scalable enterprise data fabric combined with transactional and analytic database management capabilities and rich data and application integration functionality) can deliver these critical business benefits with a much simpler architecture and a much lower total cost of ownership for financial services firms.

Not only can these new technologies help firms address the pain points they are experiencing as a result of the current crisis, but they can also help them accelerate the move toward a digital future without a costly rip-and-replace of their current operational infrastructure.

Maintaining or gaining competitive edge is reliant on investment in robust data architecture, whether a firm is currently ahead of the curve or lagging behind. Those that fail to make these investments will continue to struggle, even when some semblance of normality returns to the markets.

The digital transformation of a firm is dependent on investment in solid data foundations. When looking to the future, consider the difference these new, non-disruptive, enterprise-wide data technologies can make to your firm’s client interactions, strategic insights, risk management activities and regulatory compliance, delivering critical data, insights, and actions to your key stakeholders inside and outside the company, on demand and in real time.

Firebrand Research

We’re passionate about capital markets research. Our expertise is in providing research and advisory services to firms across the capital markets spectrum. From fintech investments to business case building, we have the skills to help you get the job done.

- The voice of the market

- Independent

- Built on decades of research

- Practical not posturing

- Diversity of approach

- Market research should be accessible

For more information visit www.fintechfirebrand.com or email contact@fintechfirebrand.com

InterSystems

Established in 1978, InterSystems is the leading provider of technology for critical data initiatives in the healthcare, finance, manufacturing and supply chain sectors, including production applications at most of the top global banks. Its cloud-first data platforms solve interoperability, speed, and scalability problems for large organizations around the globe. InterSystems is committed to excellence through its award-winning, 24×7 support for customers and partners in more than 80 countries. Privately held and headquartered in Cambridge, Massachusetts, InterSystems has 25 offices worldwide.

For more information, please visit InterSystems.com/Financial

[1] “Building operational resilience: impact tolerances for important business services”, FCA, December 2019.